Ch 1. Introduction

Deep Learning

Deep Learning Fundamentals:

- Unlike traditional programming with predefined rules, deep learning learns patterns from examples.

- Intelligence is often conflated with self-awareness, but self-awareness is not necessary for AI to perform complex tasks.

- Edsger W. Dijkstra compared the debate over machine intelligence to asking whether submarines can swim.

- Deep learning trains deep neural networks using large datasets to approximate complex functions.

The Deep Learning Revolution:

- Traditional machine learning relied on feature engineering, where human-defined transformations made data more suitable for algorithms.

- Example: Identifying handwritten digits by defining edge filters and counting enclosed loops.

- Deep learning automates feature extraction from raw data, refining its own representations during training.

- Handcrafted features are still useful but are often outperformed by deep learning’s automated feature learning.

Comparison of Traditional ML vs. Deep Learning:

- Traditional ML: Features are manually designed, and results depend on their quality.

- Deep Learning: The model extracts hierarchical features autonomously, optimizing its own performance.
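The contrast above can be sketched with a single edge filter. This is a minimal illustration, assuming a toy 5x5 image and a Sobel-style kernel (both chosen here for illustration, not taken from the chapter): the handcrafted filter has fixed weights picked by a person, while the `nn.Conv2d` layer performs the same operation with weights that training would adjust.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Toy 5x5 grayscale image (batch=1, channels=1): dark left side, bright right side.
image = torch.zeros(1, 1, 5, 5)
image[..., 2:] = 1.0

# Handcrafted feature: a fixed Sobel-style kernel that responds to vertical edges.
sobel_x = torch.tensor([[-1.0, 0.0, 1.0],
                        [-2.0, 0.0, 2.0],
                        [-1.0, 0.0, 1.0]]).reshape(1, 1, 3, 3)
handcrafted = F.conv2d(image, sobel_x)  # shape (1, 1, 3, 3)
print(handcrafted[0, 0, 0].tolist())    # [4.0, 4.0, 0.0]: strong response at the edge

# Learned feature: the same operation, but the kernel is a trainable parameter
# that starts random and would be refined by gradient descent during training.
learned_filter = nn.Conv2d(in_channels=1, out_channels=1, kernel_size=3, bias=False)
learned = learned_filter(image)
print(learned_filter.weight.requires_grad)  # True
```

In the traditional approach the quality of `sobel_x` is fixed by its designer; in the deep learning approach the kernel values are just another set of parameters to optimize.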

Requirements for Deep Learning Success:

- Ability to ingest and process data.

- Definition of a deep learning model.

- Training process to learn useful representations and achieve desired outputs.

Training Process in Deep Learning:

- A criterion function (also called a loss function) measures the discrepancy between model predictions and actual data.

- Training iteratively adjusts the model to minimize this discrepancy.

- The goal is to achieve low error rates, even on new, unseen data.
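The loop described above can be sketched on a toy problem. This is a minimal sketch, assuming a one-variable linear model, a mean-squared-error criterion, and a learning rate of 0.1 (all illustrative choices, not the chapter's): the criterion scores the predictions, and each step nudges the parameters to reduce that score.

```python
import torch

# Toy data generated from y = 2x + 1 (an assumption made for this sketch).
x = torch.linspace(-1, 1, 20)
y = 2.0 * x + 1.0

# Model parameters, both starting at zero.
w = torch.zeros(1, requires_grad=True)
b = torch.zeros(1, requires_grad=True)

def criterion(pred, target):
    """Mean squared error between predictions and targets."""
    return ((pred - target) ** 2).mean()

losses = []
for step in range(200):
    loss = criterion(w * x + b, y)
    loss.backward()                 # compute d(loss)/dw and d(loss)/db
    with torch.no_grad():
        w -= 0.1 * w.grad           # move each parameter against its gradient
        b -= 0.1 * b.grad
        w.grad.zero_()              # clear gradients before the next step
        b.grad.zero_()
    losses.append(loss.item())

# Check the error on inputs that were not part of the training data.
with torch.no_grad():
    x_new = torch.tensor([1.5, -2.0])
    val_loss = criterion(w * x_new + b, 2.0 * x_new + 1.0)

print(losses[0], losses[-1], val_loss.item())
```

The final loss should be far below the first one, and the error on `x_new` stays low because the model recovered the underlying relationship rather than memorizing the training points.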

PyTorch for Deep Learning

Overview:

- PyTorch is a Python library designed for deep learning, offering flexibility and a user-friendly syntax.

- Initially popular in research, it has grown into a widely used deep learning tool across various applications.

- Its Pythonic nature makes it approachable for beginners while remaining powerful for professionals.

Key Features of PyTorch:

- Provides Tensors, a core data structure similar to NumPy arrays, enabling mathematical operations.

- Supports GPU acceleration, significantly speeding up computations compared to CPUs.

- Includes optimization tools for deep learning, making model training more efficient.

- Designed for expressivity, allowing complicated models to be implemented without the library imposing undue complexity of its own.

- Well-suited for scientific computing, beyond just deep learning applications.
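As a small illustration of the tensor and GPU points above, the following sketch (values chosen arbitrarily) builds tensors, mixes in a NumPy array, and moves the result to a GPU only if one is available.

```python
import numpy as np
import torch

# Tensors support the same kind of array math as NumPy.
a = torch.tensor([[1.0, 2.0], [3.0, 4.0]])
b = torch.from_numpy(np.ones((2, 2), dtype=np.float32))  # shares memory with the NumPy array
c = a @ b + a.mean()   # matrix product plus a broadcast scalar
print(c.tolist())      # [[5.5, 5.5], [9.5, 9.5]]

# The same code runs on a GPU when present; otherwise it falls back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
c_dev = c.to(device)
print(c_dev.device.type)
```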

Overview of How PyTorch Supports Deep Learning Projects

Core Structure of PyTorch

- Primarily a Python library, but much of its underlying code is written in C++ and CUDA for performance.

- Supports C++ execution for deploying models in production environments.

- The Python API is the main interface for model development, training, and inference.

Key PyTorch Components

- Tensors (`torch.Tensor`)

  - Multi-dimensional arrays similar to NumPy arrays.

  - Can run on both CPU and GPU, with easy switching.

- Autograd (`torch.autograd`)

  - Enables automatic differentiation for training deep learning models.

  - Tracks operations on tensors and computes derivatives for optimization.

- Neural Network Module (`torch.nn`)

  - Contains building blocks for deep learning models (e.g., layers, activation functions, loss functions).

- Optimizers (`torch.optim`)

  - Provides optimization algorithms (e.g., SGD, Adam) to train models.

- Data Handling (`torch.utils.data`)

  - `Dataset` class: Converts raw data into PyTorch-compatible tensors.

  - `DataLoader` class: Loads and batches data efficiently, supporting parallel processing.
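Two of these components are easy to see in isolation. The sketch below first uses autograd to differentiate a simple expression, then wraps toy data in a `Dataset` and batches it with a `DataLoader`. The class name `SquaresDataset` and its contents are hypothetical, made up for this illustration.

```python
import torch
from torch.utils.data import Dataset, DataLoader

# Autograd: for y = x^2 + 3x, dy/dx at x = 2 is 2*2 + 3 = 7.
x = torch.tensor(2.0, requires_grad=True)
y = x ** 2 + 3 * x
y.backward()
grad = x.grad
print(grad.item())  # 7.0

class SquaresDataset(Dataset):
    """Hypothetical dataset pairing each number n with its square."""

    def __init__(self, n):
        self.inputs = torch.arange(n, dtype=torch.float32).unsqueeze(1)
        self.targets = self.inputs ** 2

    def __len__(self):
        return len(self.inputs)

    def __getitem__(self, idx):
        return self.inputs[idx], self.targets[idx]

# DataLoader groups samples into batches; the last batch holds the remainder.
loader = DataLoader(SquaresDataset(10), batch_size=4, shuffle=False)
batch_shapes = [tuple(xb.shape) for xb, _ in loader]
print(batch_shapes)  # [(4, 1), (4, 1), (2, 1)]
```

A custom `Dataset` only needs `__len__` and `__getitem__`; the `DataLoader` handles batching, optional shuffling, and optional worker processes on top of it.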

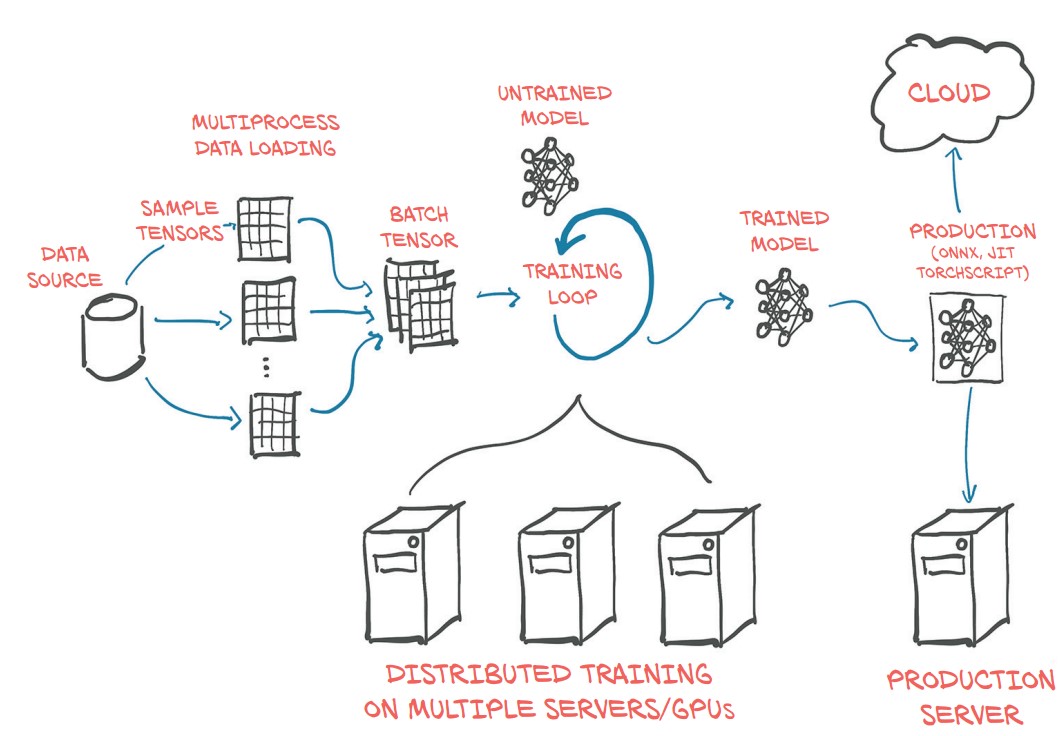

Deep Learning Workflow in PyTorch

- Data Preparation

  - Data is loaded from storage and converted into tensors.

  - `Dataset` and `DataLoader` handle data transformation and batching.

- Model Definition

  - Built using `torch.nn` components like fully connected layers and convolutions.

- Training Process

  - A training loop iterates over the dataset using `for` loops.

  - Loss functions from `torch.nn` compare predictions with targets.

  - Autograd computes gradients automatically.

  - Optimizers from `torch.optim` adjust model parameters.

  - Can be scaled to multi-GPU or distributed computing using `torch.nn.parallel.DistributedDataParallel`.

- Deployment

  - The trained model can be exported and used in different environments.

  - Deployment options include:

    - Running the model on a server or cloud platform.

    - Embedding the model in a larger application or mobile device.

    - Exporting with ONNX for compatibility with different runtimes.

    - TorchScript compilation for optimized execution outside Python.
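The first three stages of this workflow fit in a short script. The following is a minimal sketch on synthetic data, assuming a small two-layer network, mean-squared-error loss, and Adam with a learning rate of 0.01 (illustrative choices, not recommendations from the chapter).

```python
import torch
import torch.nn as nn
from torch.utils.data import TensorDataset, DataLoader

torch.manual_seed(0)

# 1. Data preparation: synthetic inputs whose target is the sum of both features.
inputs = torch.rand(256, 2)
targets = inputs.sum(dim=1, keepdim=True)
loader = DataLoader(TensorDataset(inputs, targets), batch_size=32, shuffle=True)

# 2. Model definition: fully connected layers from torch.nn.
model = nn.Sequential(nn.Linear(2, 8), nn.ReLU(), nn.Linear(8, 1))

# 3. Training: loss function, optimizer, and a plain Python for loop.
loss_fn = nn.MSELoss()
optimizer = torch.optim.Adam(model.parameters(), lr=0.01)

def mean_loss():
    """Loss over the full dataset, computed without tracking gradients."""
    with torch.no_grad():
        return loss_fn(model(inputs), targets).item()

loss_before = mean_loss()
for epoch in range(20):
    for xb, yb in loader:
        optimizer.zero_grad()          # reset gradients from the previous batch
        loss = loss_fn(model(xb), yb)  # compare predictions with targets
        loss.backward()                # autograd computes the gradients
        optimizer.step()               # optimizer updates the parameters
loss_after = mean_loss()

print(loss_before, loss_after)
```

The four calls inside the inner loop (`zero_grad`, forward pass and loss, `backward`, `step`) are the core pattern; real projects add validation, checkpointing, and device placement around it.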

Production Capabilities

- Eager Execution (default): PyTorch operations execute immediately in Python.

- TorchScript: Allows ahead-of-time compilation, enabling model execution in C++ or mobile environments.

- ONNX Support: Exports models for interoperability with different AI frameworks.
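The difference between eager execution and TorchScript can be sketched as follows. This is a minimal example, assuming a tiny model and an in-memory buffer in place of a file on disk. It uses `torch.jit.script`, `torch.jit.save`, and `torch.jit.load`; recent PyTorch releases may emit deprecation warnings for the TorchScript API. ONNX export is not shown here.

```python
import io
import torch
import torch.nn as nn

torch.manual_seed(0)

model = nn.Sequential(nn.Linear(4, 2), nn.ReLU())
model.eval()
example = torch.rand(1, 4)

# Eager execution: the forward pass runs immediately, line by line, in Python.
eager_out = model(example)

# TorchScript: compile the model into a serializable form that no longer
# depends on the Python class definition.
scripted = torch.jit.script(model)

# Save to an in-memory buffer (a file path would work the same way) and reload.
buffer = io.BytesIO()
torch.jit.save(scripted, buffer)
buffer.seek(0)
restored = torch.jit.load(buffer)
restored_out = restored(example)

print(torch.allclose(eager_out, restored_out))  # True
```

The saved archive is what a C++ or mobile runtime would load; the Python round trip here only confirms that the compiled model reproduces the eager result.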